Need to let loose a primal scream without collecting footnotes first? Have a sneer percolating in your system but not enough time/energy to make a whole post about it? Go forth and be mid: Welcome to the Stubsack, your first port of call for learning fresh Awful you’ll near-instantly regret.

Any awful.systems sub may be subsneered in this subthread, techtakes or no.

If your sneer seems higher quality than you thought, feel free to cut’n’paste it into its own post — there’s no quota for posting and the bar really isn’t that high.

The post Xitter web has spawned soo many “esoteric” right wing freaks, but there’s no appropriate sneer-space for them. I’m talking redscare-ish, reality challenged “culture critics” who write about everything but understand nothing. I’m talking about reply-guys who make the same 6 tweets about the same 3 subjects. They’re inescapable at this point, yet I don’t see them mocked (as much as they should be)

Like, there was one dude a while back who insisted that women couldn’t be surgeons because they didn’t believe in the moon or in stars? I think each and every one of these guys is uniquely fucked up and if I can’t escape them, I would love to sneer at them.

Tangentially topical, here’s a startup (of course) who offers CNC-milled marble statuary:

Bloomberg: This Startup Will Make a Sculpture of Your Dog for $10,000

Via this HN thread https://news.ycombinator.com/item?id=41248112

Not really TESCREAL, more really really late stage capitalism.

Although weirdly I’d be up for one of those really cool late Roman Republic realist busts.

might involve some amount of hubris you say…

This really opened my eyes to some historical context I never thought of before.

My initial gut reaction was judgmental about the way billionaires spend their money; thinking it might involve some amount of hubris.

Then I realized I have no idea of how sculpture that are now show in museums as treasured historical art pieces were judge in the time they were created. Today we treasure them. But what did the general population think of them? I have no idea.

I imagine that at the time of their commissioning they were also paid by affluent people that could afford such luxuries. People that probably mirror today’s billionaires in influence and access. So what’s different about these?

Tech guy can’t figure out the difference between kitsch and actual art, what a shock.

*brain throbbing furiously* hang on… if artists produced what we say is moving and novel work WHILE wealthy people threw money at whatever bullshit they wanted, that must mean wealthy people throwing money at whatever bullshit they want is good

It’s amazing to watch them flock together like this, nature is beautiful 😍

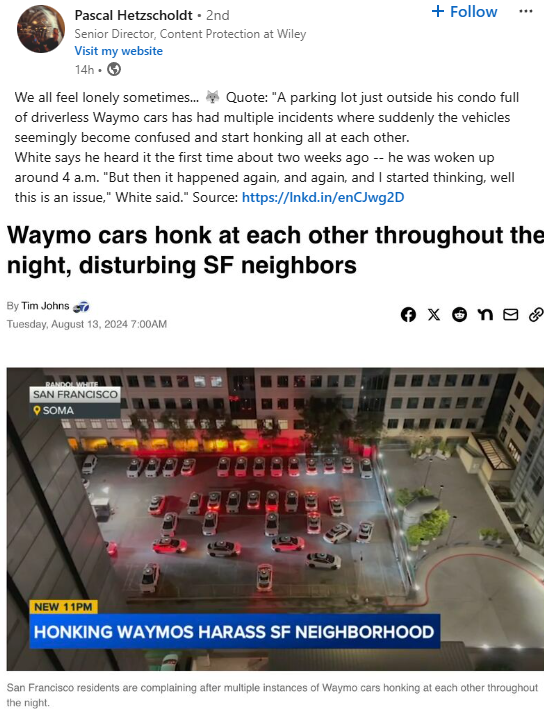

I used to wonder if they had thought about deadlock/livelock re self driving cars. Thanks to modern technology I no longer have to wonder. Thanks!

saw a video of this yesterday, that “honk” title extremely understates how fucking dumb the problem is

in the video I saw, those dumb-ass things are literally crawling forward and back in the parking lot, because the one in front of it is also doing it, because…

yes, a multi-car movement deadlock, with a visually clear solution (which any human driver would be able to implement in seconds) that nonetheless still doesn’t happen because….? I guess waymo didn’t code in inter-car communication or something

seriously, find a copy and watch. it’ll give a lovely kicker to your day :>
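For anyone who hasn't met the term: what the video shows is closer to a livelock than a deadlock, since everyone keeps moving and nobody gets anywhere. A toy sketch of the failure mode, assuming two cars running the same "polite" rule and a hypothetical priority tie-break as the fix. This is an illustration only, not Waymo's actual logic, which nobody in this thread has seen:

```python
def simulate(ticks, tie_break=False):
    """Two cars contend for one free slot.

    Returns the tick at which exactly one car claims the slot,
    or None if they never sort it out within `ticks`.
    """
    last = ["advance", "advance"]  # both nose forward on tick 0
    for tick in range(1, ticks + 1):
        nxt = []
        for car in (0, 1):
            other = last[1 - car]
            if tie_break:
                # Hypothetical fix: car 0 has priority, car 1 always yields.
                nxt.append("advance" if car == 0 else "back_off")
            else:
                # Symmetric politeness: mirror the opposite of what the
                # other car just did.
                nxt.append("back_off" if other == "advance" else "advance")
        if nxt.count("advance") == 1:
            return tick
        last = nxt
    return None


print(simulate(100))                  # None: forward, back, forward, back...
print(simulate(100, tie_break=True))  # 1: resolved on the first tick
```

Any asymmetry at all (an ID, a random delay, a human waving) breaks the loop, which is why the visually obvious fix is so obvious.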

Oh this sounds like a dog I used to have as a kid! They needed more enrichment during the day or else she’d bark into the void all night and get super excited when another dog barked back.

Have they tried taking the waymos out for walkies?

This is what happens in the absence of a natural predator.

No joke but actually yes?

Looks like an opportunity for the DoT to introduce a new breeding colony of traffic cones to the area!

this will probably become a NotAwfulTech post after I explore a bit more, but here’s a quick follow-up to my post last stubsack looking for language learning apps:

the open source apps for the learning system I want to use do exist! that system is essentially an automation around reading an interesting text in Spanish (or any other language), marking and translating terms and phrases with a translation dictionary, and generating flash cards/training materials for those marked terms and phrases. there’s no good name for the apps that implement this idea as a whole so I’m gonna call them the LWT family for reasons that will become clear.

briefly, the LWT family apps I’ve discovered so far are:

- LWT (Learning With Texts) is the original open source system that implemented the learning system I described above (though LWT itself originated as an open source clone of LingQ with some ideas from other learning systems). the Hugo Fara fork is the most recently-maintained version of LWT, but it’s generally considered finished (and extraordinarily difficult to modify) software. I need to look into LWT more since it’s still in active use; I believe it uses an Anki exporter for spaced repetition training. it doesn’t seem to have a mobile UI, which might be a dealbreaker since I’ll probably be doing a lot of learning from my phone

- Lute (Learning Using Texts) is a modernized LWT remake. this one is being developed for stability, so it’s missing features but the ones that exist are reputedly pretty solid. it does have a workable mobile UI, but it lacks any training framework at all (it may have an extremely early Anki plugin to generate flash cards)

- LinguaCafe is a completely reworked LWT with a modern UI. it’s got a bunch of features, but it’s a bit janky overall. this is the one I’m using and liking so far! installing it is a fucking nightmare (you have to use their docker-compose file only, with docker not podman, and absolutely slaughter the permissions on your bind mounts, and no you can’t fire it up native) but the UI’s very modern, it works well on mobile (other than jank), and it has its own spaced repetition training framework as well as (currently essentially useless) Anki export. it supports a variety of freely available translation dictionaries (which it keeps in its own storage so they’re local and very fast) and utterly optional DeepL support I haven’t felt the need to enable. in spite of my nitpicks, I really am enjoying this one so far (but I’m only a couple days in)
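For the curious, the core loop all three apps automate (read a text, mark terms, look them up, spit out flash cards) is small enough to sketch. This is a minimal illustration under assumptions: `FlashCard`, `make_cards`, and the toy dictionary are made-up names, and none of this is how LWT, Lute, or LinguaCafe actually implement it:

```python
from dataclasses import dataclass


@dataclass
class FlashCard:
    front: str    # term in the language being learned
    back: str     # translation from the dictionary
    context: str  # sentence the term was marked in


def make_cards(text, marked_terms, dictionary):
    """Turn marked terms into flash cards using a local translation dictionary."""
    sentences = [s.strip() for s in text.split(".") if s.strip()]
    cards = []
    for term in marked_terms:
        translation = dictionary.get(term.lower())
        if translation is None:
            continue  # no entry; a real app would ask the reader to supply one
        # Use the first sentence containing the term as context.
        context = next((s for s in sentences if term.lower() in s.lower()), "")
        cards.append(FlashCard(term, translation, context))
    return cards


text = "El gato duerme. La casa es grande."
dictionary = {"gato": "cat", "casa": "house"}
cards = make_cards(text, ["gato", "casa", "perro"], dictionary)
for card in cards:
    print(card)
```

The spaced repetition scheduling on top of the cards is the part the apps disagree on (own framework vs. Anki export), so it's left out here.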

you have to use their docker-compose file only, with docker not podman, and absolutely slaughter the permissions on your bind mounts, and no you can’t fire it up native

yeah I have no idea what any of these words mean

singe marks from where the curses landed

speaking of

one of my endeavours the last few days (although heavily split into pieces between migraines and other downtimes) was to figure out how to segment containers into vlan splits (bc reasons), and doing this on podman

the docs will (by omission or directly) lie to you so much. the execution boundaries of root vs rootless cause absolutely hilarious failure modes. things that are required for operation are Recommended packages (in the apt/dpkg sense)

utter and complete clownshow bullshit. it does my head in to think how much human time has been wasted on falling arse-over-face to get in on this shit purely after docker ran a multi-year vc-funded pr campaign. and even more to see, at every fucking interaction with this shit, just how absolutely infantile the implementations of any of the ideas and tooling are

handy to know about :)

Post from July, tweet from today:

It’s easy to forget that Scottstar Codex just makes shit up, but what the fuck “dynamic” is he talking about? He’s describing this like a recurring pattern and not an addled fever dream

There’s a dynamic in gun control debates, where the anti-gun side says “YOU NEED TO BAN THE BAD ASSAULT GUNS, YOU KNOW, THE ONES THAT COMMIT ALL THE SCHOOL SHOOTINGS”. Then Congress wants to look tough, so they ban some poorly-defined set of guns. Then the Supreme Court strikes it down, which Congress could easily have predicted but they were so fixated on looking tough that they didn’t bother double-checking it was constitutional. Then they pass some much weaker bill, and a hobbyist discovers that if you add such-and-such a 3D printed part to a legal gun, it becomes exactly like whatever category of guns they banned. Then someone commits another school shooting, and the anti-gun people come back with “WHY DIDN’T YOU BAN THE BAD ASSAULT GUNS? I THOUGHT WE TOLD YOU TO BE TOUGH! WHY CAN’T ANYONE EVER BE TOUGH ON GUNS?”

Embarrassing to be this uninformed about such a high profile issue, no less one that you’re choosing to write about derisively.

Surely this is 3 or 4 different anti-gun control tropes all smashed together.

This came up in a podcast I listen to:

WaPo: "OpenAI illegally barred staff from airing safety risks, whistleblowers say"

archive link https://archive.is/E3M2p

OpenAI whistleblowers have filed a complaint with the Securities and Exchange Commission alleging the artificial intelligence company illegally prohibited its employees from warning regulators about the grave risks its technology may pose to humanity, calling for an investigation.

While I’m not prepared to defend OpenAI here I suspect this is just to shut up the most hysterical employees who still actually believe they’re building the P(doom) machine.

Short story: it’s smoke and mirrors.

Longer story: This is now how software releases work I guess. A lot is running on OpenAI’s anticipated release of GPT 5. They have to keep promising enormous leaps in capability because everyone else has caught up and there’s no more training data. So the next trick is that for their next batch of models they have “solved” various problems that people say you can’t solve with LLMs, and they are going to be massively better without needing more data.

But, as someone with insider info, it’s all smoke and mirrors.

The model that “solved” structured data is empirically worse at other tasks as a result, and I imagine the solution basically just looks like polling multiple responses until the parser validates on the other end (so basically it’s a price optimization afaik).
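To be clear about what that guess means, here’s a minimal sketch of “poll until the parser validates”. Everything here is an assumption for illustration: `generate` is a hypothetical stand-in for whatever produces a response, and this is not a claim about how OpenAI actually does it:

```python
import json


def poll_until_valid(generate, max_attempts=5):
    """Call generate() until its output parses as JSON.

    Returns (parsed_value, attempts_used). `generate` is any zero-argument
    callable returning a string; it is a placeholder, not a real API.
    """
    for attempt in range(1, max_attempts + 1):
        raw = generate()
        try:
            return json.loads(raw), attempt
        except json.JSONDecodeError:
            continue  # throw the response away and pay for another one
    raise ValueError(f"no valid JSON after {max_attempts} attempts")


# Fake generator that fails twice before producing valid output.
responses = iter(['{"a": 1', "not json", '{"a": 1}'])
result, attempts = poll_until_valid(lambda: next(responses))
print(result, attempts)  # {'a': 1} 3
```

Every discarded response still costs a generation, which is why doing this cheaply server-side would count as a price optimization and not a capability.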

The next large model launching with the new Q* change tomorrow is “approaching agi because it can now reliably count letters” but actually it’s still just agents (Q* looks to be just a cost optimization of agents on the backend, that’s basically it), because the only way it can count letters is that it invokes agents and tool use to write a python program and feed the text into that. Basically, it is all the things that already exist independently but wrapped up together. Interestingly, they’re so confident in this model that they don’t run the resulting python themselves. It’s still up to you or one of those LLM wrapper companies to execute the likely broken from time to time code to um… *checks notes* count the number of letters in a sentence.
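For scale, the kind of program being generated there is roughly this. A sketch only: `count_letter` is an illustrative name and the actual generated code is not something I’ve seen:

```python
def count_letter(text, letter):
    """Count case-insensitive occurrences of a single letter in text."""
    return text.lower().count(letter.lower())


print(count_letter("strawberry", "r"))  # 3
```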

But, by rearranging what already exists and claiming it solved the fundamental issues, OpenAI can claim exponential progress, terrify investors into blowing more money into the ecosystem, and make true believers lose their mind.

Expect more of this around GPT-5 which they promise “Is so scary they can’t release it until after the elections”. My guess? It’s nothing different, but they have to create a story so that true believers will see it as something different.

Yeah, I’m not in any doubt that the C-level and marketing team are goosing the numbers like crazy to keep the bubble from bursting, but I also think they’re the ones that are most cognizant of the fact that ChatGPT is definitely not the Doom Machine. But I also believe they have employees who they cannot fire because they would spread a hella lot doomspeak if they did, who are True Believers.

I also believe they have employees who they cannot fire because they would spread a hella lot doomspeak if they did, who are True Believers.

Part of me suspects they probably also aren’t the sharpest knives in OpenAI’s drawer.

It can be both. Like, probably OpenAI is kind of hoping that this story becomes widespread and is taken seriously, and has no problem suggesting implicitly and explicitly that their employees’ stocks are tied to how scared everyone is.

Remember when Altman almost got ousted and people got pressured not to walk? That their options were at risk?

Strange hysteria like this doesn’t need just one reason. It just needs an input dependency and ambiguity, the rest takes care of itself.

Well, it’s now yesterday’s tomorrow and while there’s an update I’m not seeing a Q* announcement.

Q*

My understanding is that it was renamed or rebranded to Strawberry, which is itself nebulous marketing: maybe it’s the new larger model or maybe it’s GPT-5 or maybe…

it’s all smoke and mirrors. I think my point is, they made some cost optimizations and mostly moved around things that existed, and they’ll keep doing that.

OH

I first saw this then later saw the “openai employees tweeted 🍓” and thought the latter was them being cheeky dipshits about the former. admittedly I didn’t look deeper (because ugh)

but this is even more hilarious and dumb

I’m not seeing a Strawberry announcement either.

I mean, if you play on the doom to hype yourself, dealing with employees that take that seriously feels like a deserved outcome.

yall might want to take notice of this thing https://discuss.tchncs.de/post/20460779

https://en.wikipedia.org/wiki/Wikipedia:Wikipedia_Signpost/2024-08-14/Recent_research

STORM: AI agents role-play as “Wikipedia editors” and “experts” to create Wikipedia-like articles, a more sophisticated effort than previous auto-generation systems

ai slop in extruded text form, now longer and worse! and burns extra square kilometers of rainforest

literally why would you do this. you can research anything you stupid bastards why would you make this

People out there acting like “research” using LLMs is ethical

LLM, tell me the most obviously persuasive sort of science devoid of context. Historically, that’s been super helpful so let’s do more of that.

we propose the STORM paradigm for the Synthesis of Topic Outlines through Retrieval and Multi-perspective Question Asking

oh come the fuck on

The authors hail from Monica S. Lam’s group at Stanford, which has also published several other papers involving LLMs and Wikimedia projects since 2023 (see our previous coverage: WikiChat, “the first few-shot LLM-based chatbot that almost never hallucinates” – a paper that received the Wikimedia Foundation’s “Research Award of the Year” some weeks ago).

from the same minds as STOTRMPQA comes: we constructed this LLM so it won’t generate a response unless similar text appears in the Wikipedia corpus and now it almost never entirely fucks up. award-winning!

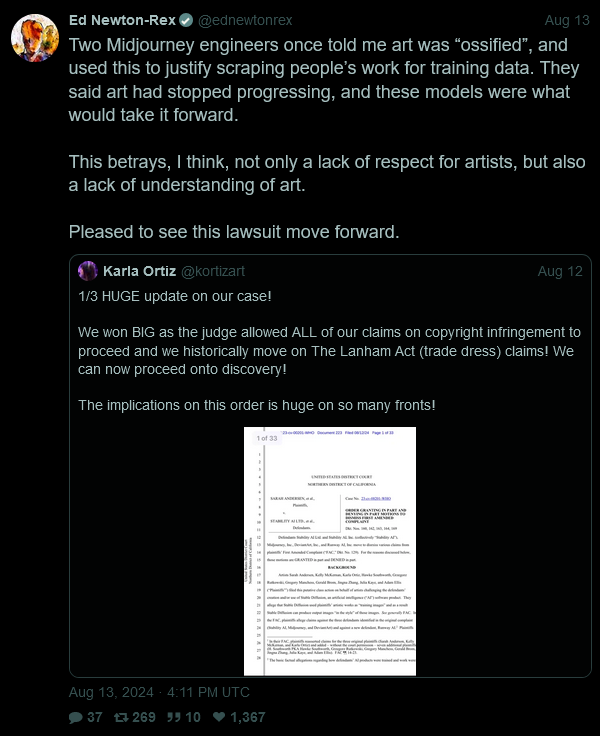

Picked up an oddly good sneer from a gen-AI CEO, of all people (thanks to @ai_shame for catching it):

jesus, that’s telling. and I can 100% see that sentence forming in the heads of the types of people who fall over themselves to create something like these tools. so caught up in the math and the technical cool, they can’t appreciate other beauty

Capitalist blames lazy workers for not putting in the hours to make themselves obsolete.

WSJ: Eric Schmidt Says Google Is Falling Behind on AI—And Remote Work Is Why

Archive: https://archive.is/JXvtV

Eric Schmidt Says Google Is Falling Behind on AI—And Remote Work Is Why

That’s another great benefit of remote work, then.

Also imagine …

work-life balance […] was more important than winning

… saying this unironically.

I always want to point out how there are never specific metrics attached to these criticisms. Whenever I’ve seen actual numbers checked there doesn’t appear to be a significant impact between before and after companies started WFH during the pandemic.

Of course I also haven’t looked too close because I’ve been too busy enjoying my life rather than pretending my boss is funny at a water cooler.

So on one hand the CEOs want their minions back in the office and on the other they want to replace them with AIs?

Sounds like a conundrum. Or a business opportunity!

Presenting Srvile! The brand new Servility as a Service company, with AI powered robots that will laugh at all boss jokes at the water cooler and say things like “That is such a great idea boss! Since I am an AI I can’t realise that you are just regurgitating what you read on Xshitter!” and “We certainly need more AI to solve any problem!”

Call now to order!

(AI may at times be enhanced by remote human control for “quality control”. Actual level of servility may vary and is not guaranteed.)

Referencing this discussion about digital stamps in the prev megathread, you can get them in Sweden too:

let’s see how things are going on twitter

the original is one of the new approach from the Dimes Square nazis, isn’t it?

In another forum I’m in, someone posted this article and asked if someone, anyone could understand it. I kept schtum.

Haela Hunt-Hendrix, the singer from the black metal band Litvrgy, was one of the principal organizers of this “symposium.” […] Besides making music, she seems to be interested in esoteric religious themes, numerology, and Orthodox iconography. In any case, Hunt-Hendrix claimed that Anna was stealing her ideas and twisting them in a “cryptofascist” manner.

Oh no! How could she!

Oh my god I only now notice the title is a reference to Tiqqun’s Preliminary Materials For a Theory of the Young-Girl, this is like a supercollision of dumbfuck cryptoreactionary nonsense I’ve obsessed over.

In particular she felt like Anna, whom she’d been closest with, was being dishonest about what they hoped to achieve with this whole project. Sanje further alleged that Anna’s good standing largely stemmed from her incomprehensibility, because people don’t have a clue what this is actually all about. Possibly Anna doesn’t, either.

most straightforward hegelian

jfc what did I just spend 20m reading

I got as far as “Dimes Square bohemians” in the fourth sentence before realizing that everything in that article I recognized, I would regret.

Hegelian e-girls’ VIP “symposium”

Excuse the rather formal philosophical latin but qvid in fvck?

I tried looking through the post to find out what possibly they could have to do with Hegel and found

Thankfully, Matthew shared the Googledoc the e-girls had sent him with their prepared remarks. My commentary over the next several paragraphs will only make sense if you read over them (they’re mercifully short), so I’d urge everyone to open up the hyperlink and give it a quick look.

Okay, first of all, it’s like 5 pages, “mercifully short” lol, go take a hike. Second,

Concrete philosophizing means applying insight to the alchemical transformation of everyday life.

This is in the first paragraph. I feel like reading this would make me devolve into an entire day of incoherent screaming and I have enough respect for my coworkers and loved ones to not subject them to that

“Inexplicable Cimmerian Vibes” is the name of my next band.

Bonus points if this turns out to be the output of an LLM trained by phrenologists.

“Inexplicable Cimmerian Vibes”

But all the band members have the Homer Simpson bodytype. Sadly Okilly Dokilly stopped.

omg, next time my wife asks me how she looks, I’m definitely dropping that “legible magyar admixture”

“Babe, you’re looking Haplogroup I-M437 tonight. No. Not M-437. Damn, girl, you’re an M-438.”

When you really want to confuse your astronomy post-doc partner.

EDIT: I’ve been reliably informed that that’s too many Messier objects.

That’s certainly one approach to commenting on someone’s picture. Pretty sure it’s better to stick with the standard “Wow! 😍😍😍” but this certainly sticks out from the crowd?

friend looked at this and said “ah race science geoguesser guy”

Genomeguesser

Tangentially related. In 1878, some asshole exhumed 25 human skulls from an abandoned cemetery in far Northern Sweden and took them to Helsinki, where he and his buddies measured them to “prove” that people from the region were less advanced.

Now the skulls are finally being re-interred thanks to pressure from the local community.

Here’s a background on the cemetery and the skull-snatching (in Swedish):

https://kulturmiljonorrbotten.com/2023/09/29/akamella-odekyrkogard/

Babe, new AI doom vector just dropped: AGI will corrupt knitting sites so crafters make Langford visual hack patterns![1]

https://www.zdnet.com/article/how-ai-scams-are-infiltrating-the-knitting-and-crochet-world/

[1] doom scenario is my interpretation, not actually included in ZDnet article.

Sadly, Langford hacks seem to have never achieved memetic takeoff. Having an internet legally enforced on pain of death to be text-only would probably be a good thing.

Jimmy Buffett fans in shambles.

Who had Trump accusing the Harris campaign of using AI to inflate crowd size photos on their Election ‘24 bingo card? Anyway, I’m sure that being associated with fraud and fakes is Good For AI.

it going co-evolution on the same path as cybercoins did is chefskiss.bmp

In other news, someone caught a former NFT artist using AI. Not shocked in the slightest an NFT grifter jumped on the AI train.

The NFT “art” was already terrible procedurally-generated slop, so it fits the use case 100%.