A new ripoff of an old classic

Is it a ripoff if they credit the original?

Are you implying that the credit is here? If so, where? I am not seeing it.

Bottom right.

Lower right corner.

Thanks!:-)

Shocked the title of the original isn’t Little Bobby Tables.

I honestly didn’t notice that - it was a bit small and pixelated, good catch

Bottom right

if they make it almost exactly the same and “credit” it in the smallest font possible and didn’t get permission from the original author… i would say that’s definitely a ripoff

It’s a parody

How is this a parody? It’s not poking fun at the original or pointing out its flaws.

quoth wikipedia: “A parody is a creative work designed to imitate, comment on, and/or mock its subject by means of satirical or ironic imitation.” … “The literary theorist Linda Hutcheon said ‘parody … is imitation, not always at the expense of the parodied text.’”

yeah, and that’s not a parody.

It’s not a parody. It’s a homage

it’s not a hoe-midge, it’s an oh-marge

It’s not an oh-marge, it’s ho-mahg-ee.

its not a ho, Marge. Gee, she’s a real person.

It’s not a homage, it’s just the exact same joke.

But updated for our new hellscape!

didn’t get permission from the original author

Tell me you don’t know xkcd without saying you don’t know xkcd. These comics are licensed as CC-BY-NC 2.5, which means you are allowed to remix and use them, without explicitly asking for permission, as long as you attribute the original/author (which is given here) and as long as you do it non-commercially (which is given for this post IMHO).

Tell me you don’t know xkcd without saying you don’t know xkcd.

tell me you’re completely uncreative without telling me you’re completely uncreative.

the rest of what i said stands… but whooooa you “got me” i didn’t read the license on xkcd

This work is licensed under a Creative Commons Attribution-NonCommercial 2.5 License.

that’s not attribution.

In a version that doesn’t even fully make sense. With databases there is a well-defined way to sanitize your inputs so arbitrary commands can’t be run like in the xkcd comic. But with AI it’s not even clear how to avoid all of these kinds of problems, so the chiding at the end doesn’t really make sense. If anything the person should be saying “I hope you learned not to use AI for this”.

We’re evolving too!

Increasingly verbose

if someone is actually using ai to grade papers I’m gonna LITERALLY drink water

I have a colleague who is trying hard to do it, but it isn’t good enough yet fortunately. I point out as many issues as I can to deter him but it ain’t working.

Look up Texas’s STAAR writing tests

Imma do it this evening, so hydrate up, bud

I’m gonna literally drink water if they DON’T

I’m drinking water as we speak and none of you can stop me!

As a large languag model I do not drink water

HYDROHOMIES UNITE

I’m going to drink my water before you get to it!

breaks through window, wrestles cup out of your hands, stands over you, bleeding

drinks the blood.

NOW I HAVE YOUR WATER!!

weeps

immediately a Fremen begins to extoll about my water giving virtues

Always satanise your inputs.

Hail!

But that burns.

Always sedate your inlaws

How do you rip something off and make it worse?

They give credit bottom right.

Bobby’s son

It was in fact the mum who was good with computers. Bobby himself was never that interested in exploits.

He probably found it very hard to make any accounts on computers

The original xkcd comic was better and this is a blatant ripoff. I know it says it’s based off of that original xkcd comic, but it’s basically line for line the same. Just talking about genAI instead of Databases, which ended up making less sense and made the whole joke worse.

I think it’s a paraphrase of a culturally significant webcomic inserted into a more modern context without it’s original meaning being altered.

I don’t know if I’d call it a paraphrase when it’s using 90% the exact same words.

without it’s original meaning being altered.

I think you mean “without its original meaningfully being altered.”

it literally has a credit to the original, go touch grass and stop inventing things to get mad over

Didn’t know I was mad… tell me more about what I should do and how I should do it.

deleted by His Holiness

I don’t got energy for this negativity and harassment. So I deleted my comment rather than argue. You wanna bug me about it too and I’ll block you too. You aren’t worth the energy.

ah no sorry, I actually have no idea what you wrote - I just find the “deleted by creator” stuff I see so often super funny because of how biblical it sounds

I hope you have a better day than this one, and don’t let the mob get you down

content was sinful

It’s an old joke updated for new technology … that’s part of what makes it clever.

It references the original joke (albeit in very small text)

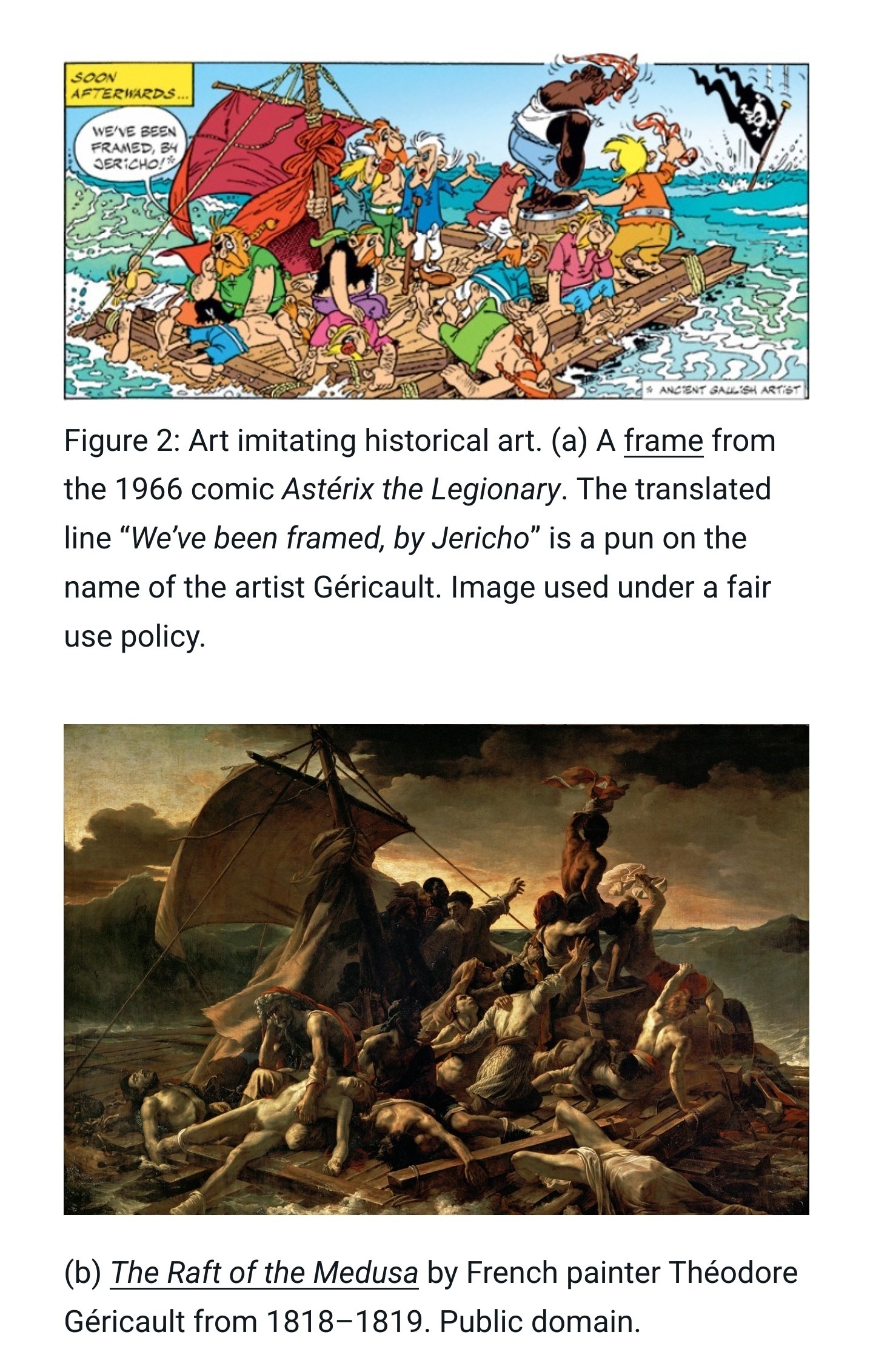

The Asterix books frequently did something similar. https://cloud.wordpress.com/2022/02/17/asterix-and-the-historical-interpretation/

Yikes. I’ve never read Asterix and Obelix, but did they really make (I assume) the only black character a straight up knuckle-dragging gorilla imitation? 😬

Cartoons back then were a little bit sambo so to speak, but the intent wasn’t strictly malicious, just uninformed.

You use the words/concepts you know to express something to an audience. If society tells you that native Americans wear headdresses, then you will likely add a headdress when introducing a new native american character, not neccesarily realising the damage of the stereotype behind it.

He’s possibly the only reoccurring black character, and yes it is very much a product of its time.

In defense of the authors the Gauls are all depicted with large bulbous noses, the Romans with Roman noses, etc; all cariceturs. https://en.m.wikipedia.org/wiki/Caricature.

In the attached image you can see Obelix is also depicted as a “knuckle dragger” (at times). The character leading them is a Roman.

This second example shows the Vikings.

The fact that it’s a joke about genAi and that joke is a rehash of existing material is rather on point though.

I… Didn’t think of it that way.

Artificial Idiocy

lil’ bobby generic

My gawds, some people need to learn what’s a homage and also stop being upset on behalf of others. This comic is fine, stop bellyaching. This is what terminal permission culture does to a motherfucker.

What is terminal permission if I may ask?

Permission culture is a term primarily criticizing copyright law. Something that I would expect db0 to agree with! 🏴☠️

Hrm, give me a moment to check the ACLs, I’ll be able to resolve all these complex conflicting rules shortly…

Nevermind, it was easier to just globally disable SeLinux so I did that. Your system should be more secure now.

Narky, but real, updoot.

Call Dr. Kevorkian and ask.

The only person who should care about anything other than the quality is Randall. However since he licensed it CC BY-NC 2.5 how he feels about it doesn’t really matter either.

I think people should be concerned about things on others’ behalfs. We all need to stick together.

This situation is a send-up though. Totally not a concern.

Oh definitely! I just meant in this particular case.

We can probably infer by the licensing that he’s cool with it.

More like “And I hope you learned not to trust the wellbeing and education of the children entrusted with you to a program that’s not capable of doing either.”

Well that would require too much work invested into stealing of https://xkcd.com/327/

It could be credibly called an homage if it had a new punchline, but methinks the creator didn’t know what “sanitize” meant in this context.

Stealing is a strong word considering it gives credit in the bottom right

Stealing in the sense that it’s the exact same joke.

It’s like a YouTuber creating a ‘reaction’ video that adds nothing but their face in the corner of the screen. Adding a link to the original video doesn’t suddenly make it reasonable.

I think it’s more equivalent to someone making a meme of a standup routine and changing text in order to make fun of something else. The original was a joke about general data sanitization circa 2007, this one is about the dangers of using unfiltered, unreviewed content for AI training.

Except this “routine” is word for word clone. It is more like people retelling the same political joke with only difference being the politician’s name… No one calls it new joke, or “homage”. We call it “yes, this joke was given to Moses on stone tablet” 😊

Its a MEH update on little bobby tables. Who is in his twenties now.

It’s his younger brother Williams, tho.

One of the best things ever about LLMs is how you can give them absolute bullshit textual garbage and they can parse it with a huge level of accuracy.

Some random chunks of html tables, output a csv and convert those values from imperial to metric.

Fragments of a python script and ask it to finish the function and create a readme to explain the purpose of the function. And while it’s at it recreate the missing functions.

Copy paste of a multilingual website with tons of formatting and spelling errors. Ask it to fix it. Boom done.

Of course, the problem here is that developers can no longer clean their inputs as well and are encouraged to send that crappy input straight along to the LLM for processing.

There’s definitely going to be a whole new wave of injection style attacks where people figure out how to reverse engineer AI company magic.

The funny thing about a comic is, you are able to express the idea without writing multiple paragraphs of words.

As a daily reader of SMBC, I can confidently tell you this rule is a suggestion at best.